Kimi K2 Thinking, the new model from China's Moonshot AI, scored 67 on the Artificial Analysis Intelligence Index, beating every other open model and sitting just behind GPT-5. It was trained on 15.5 trillion tokens using a Mixture of Experts design that activates only a small subset of its trillion parameters for each token. The result is a model that reasons better, uses tools effectively, and runs at a fraction of the cost.
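
For readers who want to see what "activating only part of the parameters" means, here is a minimal sketch of top-k expert routing in plain Python. It is a toy illustration of the general Mixture of Experts idea, not Moonshot AI's implementation: the expert count, `TOP_K`, the toy experts, and the function names `route_token` and `moe_layer` are all assumptions made up for this example.

```python
import math
import random

def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def route_token(router_scores, top_k):
    """Pick the top_k experts for one token and renormalize their gate weights."""
    probs = softmax(router_scores)
    chosen = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)[:top_k]
    norm = sum(probs[i] for i in chosen)
    return {i: probs[i] / norm for i in chosen}

def moe_layer(x, experts, router_scores, top_k):
    """Run only the selected experts and combine their outputs by gate weight."""
    gates = route_token(router_scores, top_k)
    return sum(weight * experts[i](x) for i, weight in gates.items())

# Hypothetical toy setup: 8 experts, 2 active per token.
NUM_EXPERTS = 8
TOP_K = 2
experts = [lambda x, s=i + 1: s * x for i in range(NUM_EXPERTS)]

rng = random.Random(0)
router_scores = [rng.gauss(0, 1) for _ in range(NUM_EXPERTS)]

gates = route_token(router_scores, TOP_K)
output = moe_layer(1.0, experts, router_scores, TOP_K)
print(f"active experts: {sorted(gates)} of {NUM_EXPERTS}, output: {output:.3f}")
```

Only `TOP_K` of the experts ever run for a given token, so compute per token scales with the active subset rather than the full parameter count.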

Kimi K2’s reported training cost is about 4.6 million dollars. GPT-4 is estimated at 80 to 100 million, with GPT-5 likely higher. The gap exists because Chinese labs, restricted from buying NVIDIA’s top GPUs, had to optimize their software instead of scaling hardware. They built smarter routing systems, INT4-quantized models, and efficient reinforcement learning pipelines that deliver frontier performance without frontier budgets.
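
To make the INT4 point concrete, here is a rough sketch of symmetric 4-bit weight quantization. It shows the generic technique only and assumes nothing about how Moonshot AI actually quantizes its model; `quantize_int4` and `dequantize_int4` are hypothetical helper names, and the sample weights are invented.

```python
def quantize_int4(weights):
    """Map floats to integers in [-8, 7] using one shared scale (symmetric)."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 7 if max_abs > 0 else 1.0
    quantized = [max(-8, min(7, round(w / scale))) for w in weights]
    return quantized, scale

def dequantize_int4(quantized, scale):
    """Recover approximate float weights from the 4-bit integers."""
    return [q * scale for q in quantized]

# Invented example weights for one small group.
weights = [0.62, -0.31, 0.05, -0.70, 0.18]
q, scale = quantize_int4(weights)
restored = dequantize_int4(q, scale)
max_error = max(abs(a - b) for a, b in zip(weights, restored))
print(f"int4 values: {q}, scale: {scale:.4f}, max error: {max_error:.4f}")
```

Each weight takes 4 bits instead of 16, which cuts memory and bandwidth roughly fourfold at the price of a small rounding error bounded by half the scale.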

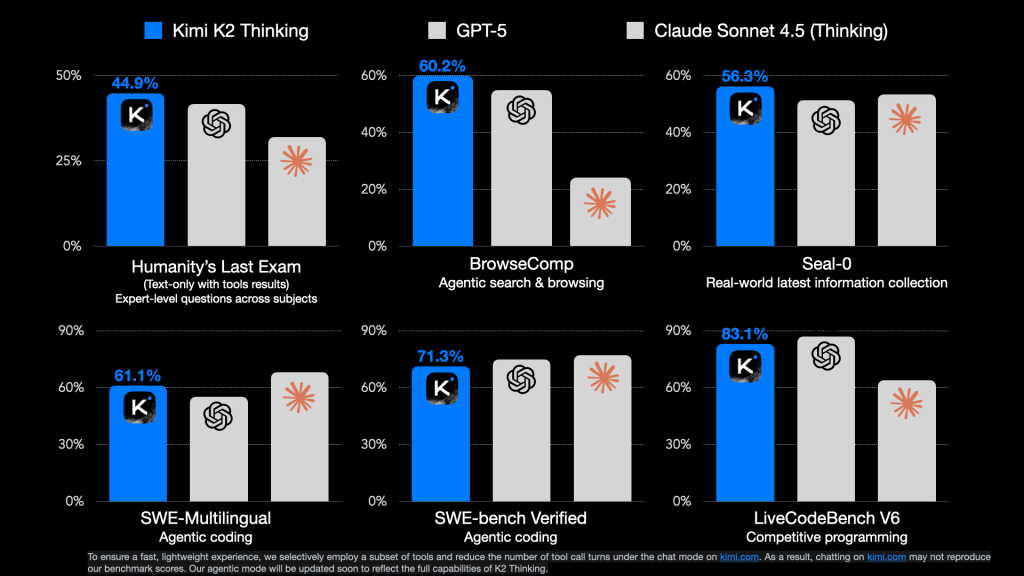

Early users say it “feels like GPT-4 with reasoning always on.” Benchmarks like Humanity’s Last Exam and τ²-Bench confirm stronger multi-step logic and tool use than any previous open model.

Meanwhile, OpenAI’s CFO recently floated a possible government “backstop” to help fund its next wave of data centers, an idea Sam Altman later disavowed. The exchange itself exposed how expensive the closed-model approach has become.

Moonshot AI went the other way. It released Kimi K2’s full weights under a permissive license, inviting developers worldwide to fine-tune and deploy. That single choice shifts power from billion-dollar data centers to open innovation.

Kimi K2 Thinking shows what happens when efficiency, openness, and strategy align. It might be the first real alternative to the closed frontier.

#KimiK2 #MoonshotAI #OpenWeights #GPT5 #ChinaAI #ReasoningModels #AgenticAI #TechStrategy #LLMs